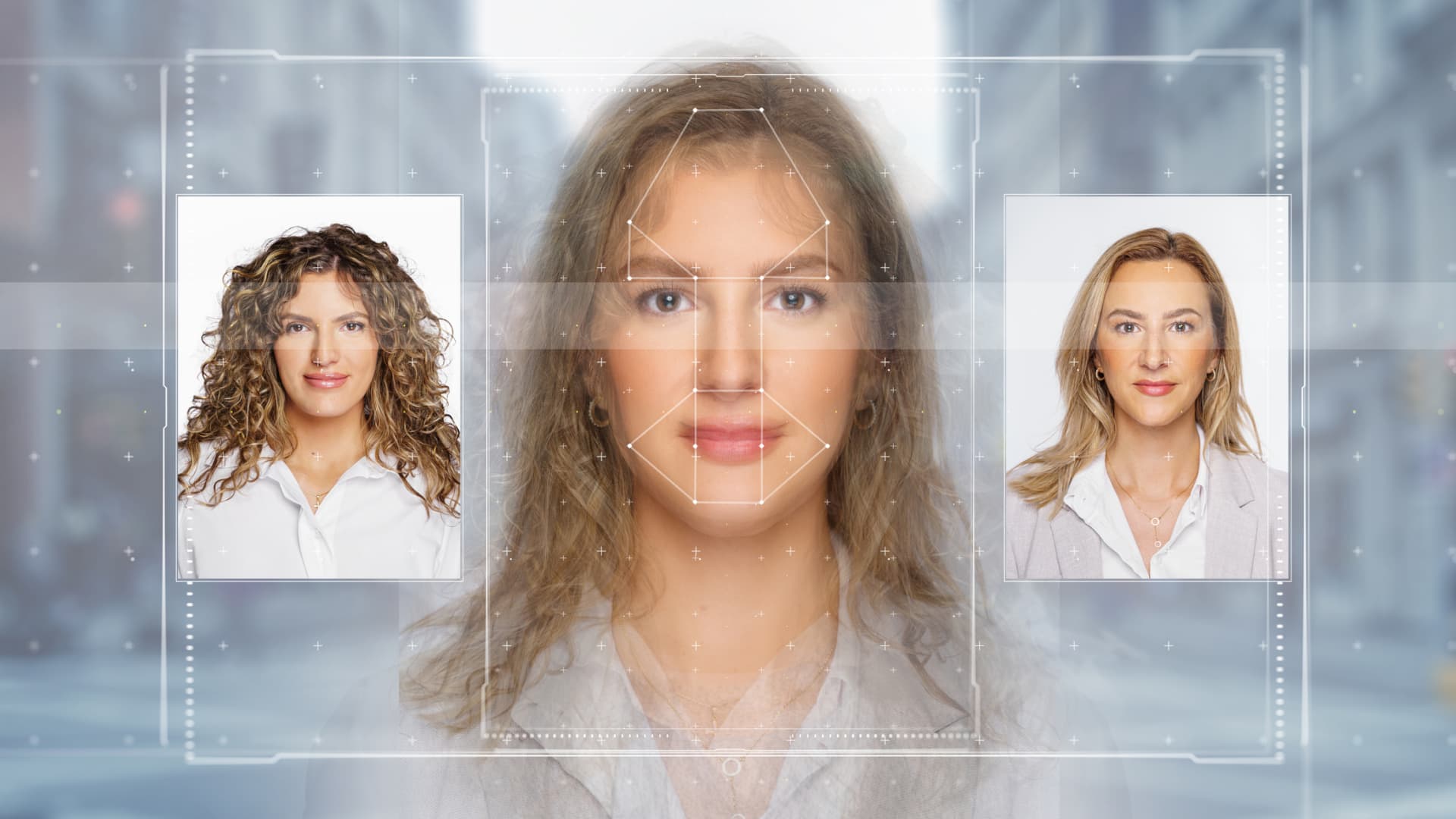

2024 is set to be the biggest global election year in history. It coincides with the rapid rise of deepfakes. In APAC alone, there was a 1530% rise in deepfakes from 2022 to 2023, according to a Sumsub report.

Photo Link | Istock | Getty Images

Ahead of the Indonesian elections on February 14, a video of the late Indonesian President Suharto advocating for the political party he once chaired went viral.

The AI-generated deepfake video that cloned his face and voice garnered 4.7 million views on X alone.

This was not an isolated incident.

In Pakistan, a deepfake of former Prime Minister Imran Khan appeared around the national elections, announcing that his party was boycotting them. Meanwhile, in the US, New Hampshire voters heard a deepfake of President Joe Biden asking them not to vote in the presidential primary.

Deepfakes of politicians are becoming increasingly common, especially with 2024 set to be the biggest global election year in history.

According to reports, at least 60 countries and more than four billion people will be voting for their leaders and representatives this year, which makes deepfakes a serious concern.

According to a Sumsub report in November, the number of deepfakes worldwide increased 10x from 2022 to 2023. In APAC alone, deepfakes grew by 1,530% during the same period.

Online media, including social platforms and digital advertising, saw the largest increase in the rate of identity fraud at 274% between 2021 and 2023. Professional services, healthcare, transportation and video games were also among the industries affected by identity fraud.

Asia is not ready to deal with deepfakes in elections in terms of regulations, technology and education, said Simon Chesterman, senior director of AI governance at AI Singapore.

In its 2024 Global Threat Report, cybersecurity firm CrowdStrike reported that with the number of elections scheduled this year, nation-state actors, including from China, Russia and Iran, are most likely to conduct misinformation or disinformation campaigns to sow disruption.

“The most serious interventions would be if a major power decided they wanted to disrupt a country’s elections – that would probably have a bigger impact than political parties playing on the fringes,” Chesterman said.

However, most deepfakes will still be created by actors within the respective countries, he said.

Carol Soon, principal researcher and head of society and culture at the Institute for Policy Studies in Singapore, said domestic actors could include opposition parties and political rivals or the far right and left.

Deepfake risks

At the very least, deepfakes pollute the information ecosystem and make it harder for people to find accurate information or form informed opinions about a party or candidate, Soon said.

Voters may also turn away from a particular candidate if they see content about a scandalous topic that goes viral before it is debunked as fake, Chesterman said. “Although several governments have tools (to prevent online falsehoods), the concern is that the genie will be out of the bottle before there is time to push it back in.”

“We saw how quickly X could be taken over by the deepfake porn featuring Taylor Swift. These things can spread incredibly quickly,” he said, adding that regulation is often insufficient and incredibly difficult to enforce. “It’s often too late.”

Adam Meyers, head of adversarial operations at CrowdStrike, said deepfakes can also trigger confirmation bias in people: “Even if they know deep down that it’s not true, if it’s the message they want and something they want to believe in, they’re not going to let it go.”

Chesterman also said that fake footage showing bad behavior during elections, such as ballot stuffing, could make people lose confidence in the validity of the election.

On the other hand, candidates may deny the truth about themselves that might be negative or unflattering and attribute it to deepfakes, Soon said.

Who should be responsible?

We now realize that social media platforms need to take more responsibility because of the quasi-public role they play, Chesterman said.

In February, 20 leading technology companies, including Microsoft, Meta, Google, Amazon and IBM, as well as artificial intelligence startup OpenAI and social media companies such as Snap, TikTok and X, announced a joint commitment to combat the misleading use of artificial intelligence in elections this year.

The technology agreement is an important first step, Soon said, but its effectiveness will depend on implementation and enforcement. As tech companies adopt different measures across their platforms, a multi-pronged approach is needed, she said.

Tech companies should also be very transparent about the kinds of decisions that are made, for example, the kinds of processes that are put in place, Soon added.

But Chesterman said it’s also unreasonable to expect private companies to perform essentially public functions. Deciding what content will be allowed on social media is difficult to do, and companies can take months to decide, he said.

“We shouldn’t just rely on the good intentions of these companies,” Chesterman added. “That’s why regulations need to be put in place and expectations set for these companies.”

To that end, the Coalition for Content Provenance and Authenticity (C2PA), a non-profit organization, introduced digital credentials for content, which will show viewers verified information such as creator information, where and when it was created, and whether generative artificial intelligence was used to create the material.

C2PA member companies include Adobe, Microsoft, Google and Intel.

OpenAI has announced that it will implement C2PA content credentials in images created with its DALL·E 3 offering early this year.

“I think it would be terrible if I said, ‘Oh yeah, I’m not worried. I feel great.’ Well, we’ll have to watch it relatively closely this year [with] extremely strict monitoring [and] extremely tight comments.”

In a Bloomberg House interview at the World Economic Forum in January, OpenAI founder and CEO Sam Altman said the company was “pretty focused” on making sure its technology wasn’t used to manipulate elections.

“I think our role is very different from the role of a distribution platform,” such as a social media site or a news publisher, he said. “We have to work with them, so it’s like create here and distribute here. And there has to be a good conversation between them.”

Meyers proposed the creation of a bipartisan, non-profit technical entity with the sole mission of analyzing and detecting deepfakes.

“The public can then send them content they suspect is being tampered with,” he said. “It’s not foolproof, but at least there’s some kind of mechanism that people can rely on.”

But ultimately, while technology is part of the solution, a large part of it depends on consumers, who aren’t ready yet, Chesterman said.

Soon also emphasized the importance of educating the public.

“We must continue our outreach and engagement efforts to foster a sense of vigilance and awareness when the public is exposed to information,” she said.

The public should be more careful. In addition to fact-checking when something is highly suspicious, users should also verify critical information, especially before sharing it with others, she said.

“There’s something for everyone to do,” Soon said. “It’s all hands on deck.”

— CNBC’s MacKenzie Sigalos and Ryan Browne contributed to this report.