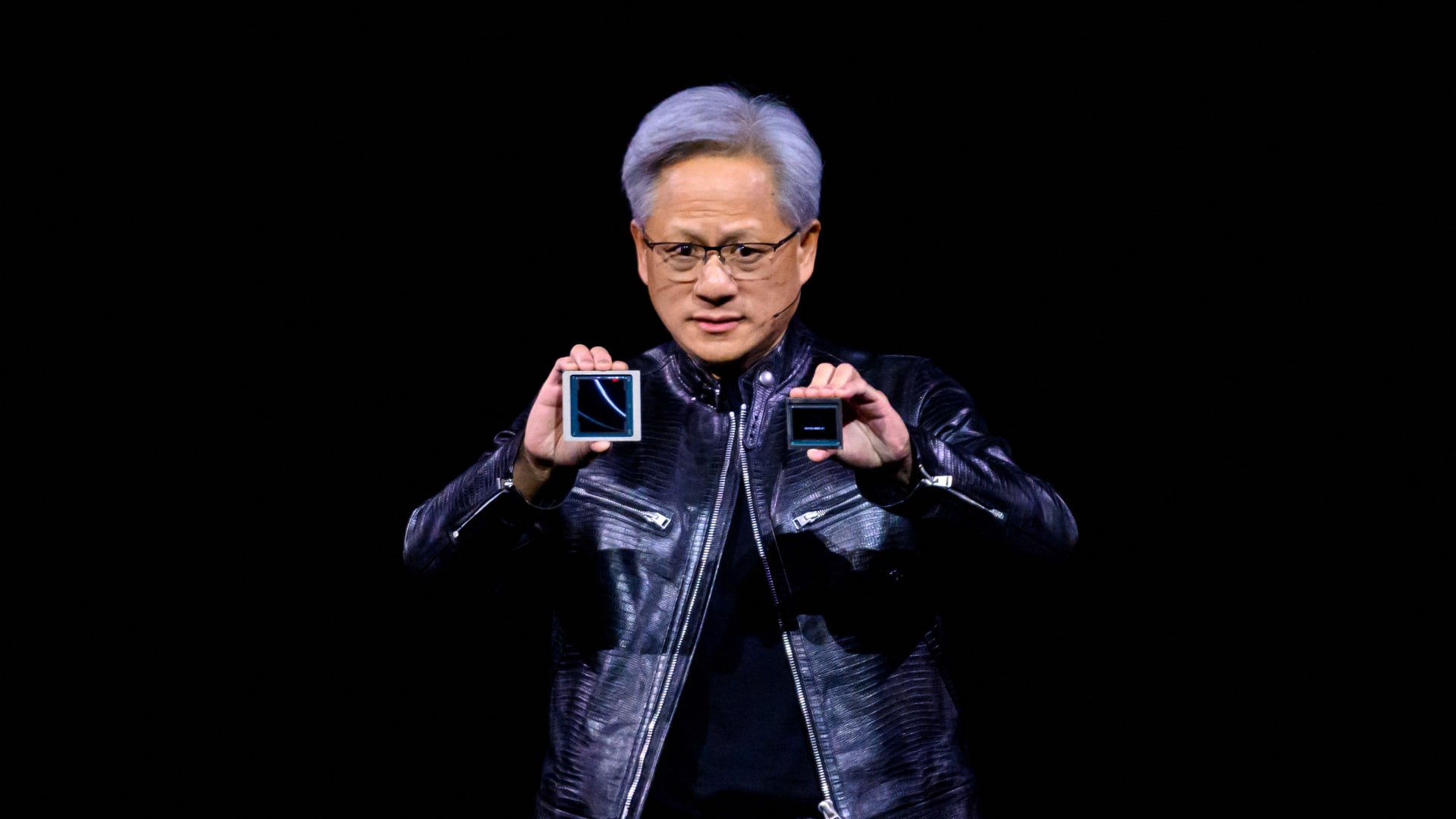

In the wake of the release of powerful new artificial intelligence chips from Nvidia, Goldman Sachs predicts significant growth for memory chips used in artificial intelligence systems. Dubbed Blackwell, Nvidia’s new GPUs that power AI models require the latest generation of high-bandwidth memory (HBM) chips, known as HBM3E. The investment bank expects the total addressable market for HBM to grow tenfold to $23 billion by 2026, from just $2.3 billion in 2022.

The Wall Street bank sees three major memory makers as the main beneficiaries of the booming HBM market: SK Hynix, Samsung Electronics and Micron. All three stocks are also traded in the US, Germany and the UK. Investors can also gain exposure to the three stocks through exchange-traded funds. While the Invesco Next Gen Connectivity ETF (KNCT) holds concentrated positions in all three stocks, the WisdomTree Artificial Intelligence and Innovation Fund (WTAI) has less than a 2% allocation to each.

Goldman Sachs said stronger AI demand has led to an increase in AI server shipments and a higher density of memory chips per GPU, the chip that powers AI, prompting the bank to “significantly increase” its estimates. Goldman analysts led by Giuni Lee said in a March 22 note to clients that all three “will benefit from strong growth in the HBM market and tight [supply/demand], as this leads to a continued significant HBM pricing premium and potential growth in each company’s overall DRAM margin.”

Investors have been cautious about the memory market for AI systems because all three major vendors are set to expand capacity, which could put downward pressure on margins. However, Goldman analysts believe challenges such as larger chip sizes and lower production yields for HBM compared with conventional DRAM memory chips are likely to keep supply tight in the near term.

The Wall Street bank is not alone in its view. Citi analysts gave clients similar advice in February.
“Despite market concerns about potential HBM oversupply as all three DRAM manufacturers enter the HBM3E space, we see firm supply tightness in the HBM3E space given increased demand from Nvidia and other AI customers amid limited supply growth on low yields and increased memory manufacturing complexity,” Citi analyst Peter Lee said in a Feb. 27 note to clients.

Goldman also reported that suppliers said “their 2024 HBM capacity is fully committed, while 2025 supply has already been allocated to customers.”

The investment bank expects SK Hynix to maintain over 50% market share for at least the next few years, thanks to its “strong customer/supply chain relationship” and its technology, which is believed to offer “better productivity and performance” than that of its rivals.

Goldman analysts also said Samsung Electronics has “an opportunity to gain market share in the medium term.” Earlier this month, Nvidia CEO Jensen Huang hinted during a media briefing that his company is in the process of approving Samsung Electronics’ latest HBM3E chips for its graphics processing units.

Meanwhile, Micron could begin to outpace rivals in 2025 by narrowing its focus to the HBM3E standard, according to the investment bank.

CNBC’s Michael Bloom contributed to this report.